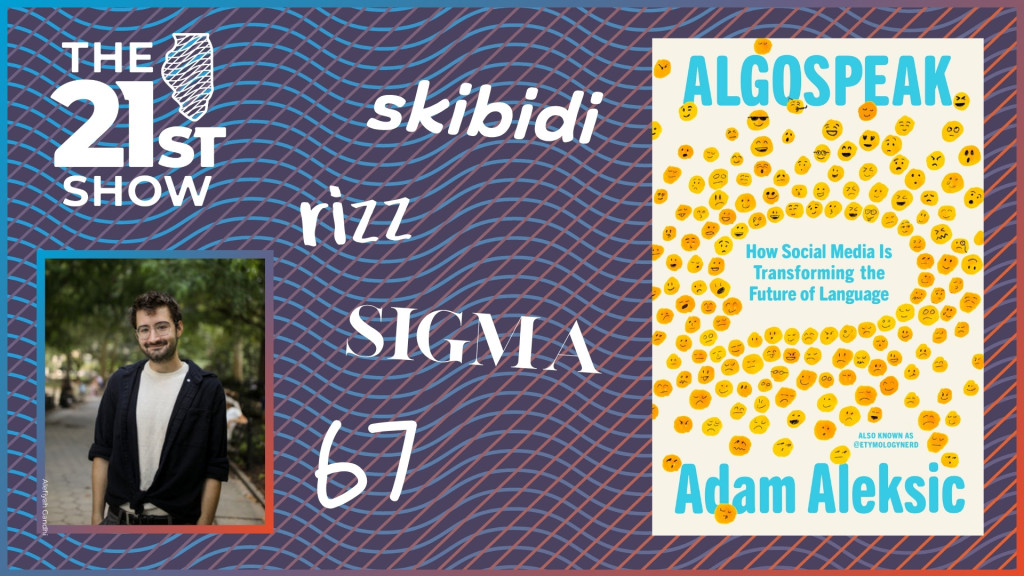

From ‘cool’ to ‘selfie’ to ‘slay’, linguist’s book takes a look at the evolution of language through the lens of social media

Images Courtesy of Penguin Random House and Alefiyah Gandhi

// This is a machine generated transcript. Please report any transcription errors to will-help@illinois.edu. [00:00:00] Brian Mackey: Today on the 21st show, the English language has evolved slowly over the course of centuries. But the linguist Adam Alexic says the rise of the social internet and especially short-form video is accelerating that effect, teeing up an inflection point on par with the invention of writing or the dawn of mass communication. All this is the subject of his book, "Algospeak: How Social Media is Transforming the Future of Language." I'm Brian Mackey with Adam Alexic for the hour today on the 21st show, which is a production of Illinois Public Media, airing on WILL in Urbana, WUIS in Springfield, WNIJ in Rockford-DeKalb, WVIK in the Quad Cities and WSIU in Carbondale. But first, news. From Illinois Public Media, this is the 21st show. I'm Brian Mackey. One constant of history from the ancient world to modernity is that our language is continually evolving. Latin is not a primary tongue anywhere outside the Vatican, but it begat Spanish, Portuguese, French and Italian. Likewise, English was influenced by German, and it's clear our manner of communicating has evolved as well. In a few short centuries we've gone from "Thinkest thou that duty shall have dread to speak when power to flattery bows" to "We had the greatest six months of any president in the history of our country, and all the fake news wants to talk about is the Jeffrey Epstein hoax." That was, if you're keeping track, President Trump and William Shakespeare, though not in that order. The language rolls on. If you have young people in your life, you might have heard them use phrases such as skibidi or rizz or sigma. Maybe that's mumbo jumbo to you, kind of like the phrase mumbo jumbo was to someone in the past. But my guest today says that's just how language evolves.
And as we continue through the internet age, more specifically the era of short-form video, the rate of change in our language is only going to accelerate. You might already know of Adam from his work online as @etymologynerd, where he posts about linguistics. You know how... [00:02:27] Adam Alexic: ChatGPT will overuse certain words like delve, commendable and meticulous? Well, now we have proof that that's starting to affect our real language. A new study just came out where they analyzed over 700,000 spontaneous spoken conversations, and what they found is that the overused AI words also started significantly being overused in our actual speech shortly after ChatGPT came out. We're simply saying "delve" at higher rates than before, especially when compared to control words like "examine" or "explore," which weren't overrepresented — and you can see those stay the same in our vocabulary. And on one level, this sort of makes sense. Humans are imitative learners, and we mimic the speech we see. So of course we're gonna start talking more like AI if that's what we're looking at. But on a much stranger level, this is actively bringing ChatGPT's biased representation of language closer to reality. [00:03:03] Brian Mackey: Adam joined us for an hour back in summer 2025. That means no calls today, but we did ask members of our texting group about this when it first aired. Tony in Champaign said, "I don't mind new words at all, as long as something like the Urban Dictionary can translate for me." We also heard from Lloyd in Danville, who said, "While words come and go with new social changes, I prefer preserving the English language. As an African American, the phrase 'right on' had multiple meanings. As Dave Chappelle says, 'Word' — it too has many applications." And now our conversation on the internet and how it's influencing our language. All right, Adam, let's start with kind of an icebreaker, if you will. 
You know, everybody has things about language that sort of grate on them, even people who accept change or even embrace change. Are there any words that you, you know, sort of have grown to hate or that, uh, you know, grind, set your teeth to grinding? [00:04:00] Adam Alexic: Well, of course, everybody makes their individual choices about how to use language, and that includes linguists themselves. We're trained in linguistics to not make any judgment calls on language, but then we also have to use language. So the more I look into words, the more I see, "Oh, you know, this word came from..." uh, this originally had like a racist origin or something. Maybe I don't want to use that one. And that's — but like, that's the only conscious decision usually I make. And that's still a subjective, you know, 'cause sometimes these words just lose meaning. And is it really bad to use them anymore? It comes down to individual judgment calls about how you should be using language. [00:04:32] Brian Mackey: Yeah, for me it's cliche that really gets me going. Like I hope listeners will never hear me use the word "impact" unless I'm talking about a vehicle crash or molars. [00:04:45] Adam Alexic: Here's one — the ChatGPT stuff. Like I try to talk less like [it], so I'm avoiding the word "delve" so it doesn't sound like I'm using AI. [00:04:53] Brian Mackey: Oh, that's a great point. Yeah. Well, we can get into how AI is gonna end all this as well. But all right, let's start where your book does, which is with this word "unalive." I got to admit, it's new to me, or it was new to me when I read about it in the book. Can you explain what that means for others who may be unfamiliar? [00:05:13] Adam Alexic: Yeah, it's a synonym of "kill" or "commit suicide" that was popularized because you can't really say "kill" on TikTok — they'll suppress your videos. 
So this word started out in a 2013 Spider-Man meme that got remixed in a 2018 Roblox meme, and then found a genuine place in this mental health community on TikTok where they needed a way to spread their stories and share resources. But it also became a part of the algospeak sociolect. Algospeak being speech used to evade algorithmic censorship, and sociolect being this social group that speaks a certain way. So we have this online community using the word "unalive" to mean "kill," and then it starts bleeding offline. And my book opens with the example of the Seattle Museum of Pop Culture last year, where they, for the 30th anniversary of Kurt Cobain's suicide, they didn't say that Kurt Cobain killed himself or committed suicide — they said that he unalived himself at 27. And you'll see kids in like middle schools writing essays on Hamlet's contemplation of unaliving himself, if we're getting into Shakespeare. But this is a real internet word bleeding into real life. And more than that, it's an example of algorithms literally shaping how we speak. But I start with that example because I think it's the most surface-level example we can point to and say, "Clearly, algorithms are shaping our speech." In fact, it's much more than that as well. [00:06:24] Brian Mackey: What is that word like? Break it down for us grammatically, right? "Unalive." Is it even like, I don't know, quote-unquote "correct"? Do you even accept that? [00:06:33] Adam Alexic: Well, it's correct in that it's used in a functional way — it's reproduced in this meaning. "Alive," you know, is "alive," and "unalive" — it kind of, it does make sense. People say it sounds like maybe Orwellian, or like 1984-speak. But in fact, we've been euphemizing language forever. What it's doing is it's a euphemism. And the way the kids are using it when they say, "Oh, you know, Hamlet thought about unaliving himself," it's because they're afraid of saying the word "suicide," which sounds like a scary word, admittedly. 
And so they turn to this more cheerful-sounding word for a serious concept, which, you know, that has its own judgment value — whether you think it's trivializing language or whether it makes it easier for these kids to have this discussion. But the fact is, we've been euphemizing language forever. That's why we say things like "kick the bucket," which also sounds a little goofy. [00:07:16] Brian Mackey: Yeah, no, that's a great point. One thing I hear in it is it removes the agency, right? Because technically some of the death we're talking about in this case is "de-aliving," right? Not necessarily "unaliving." Or maybe I'm making a distinction. [00:07:30] Adam Alexic: It's a reflexive, so you could say "he unalived himself." And I think there is — maybe it does remove agency. I don't know, it's your call again. I just like to tell people where these words come from, and maybe you can make your own decisions. But it's good to be aware of where they are coming from, and that is the algorithm right now. [00:07:46] Brian Mackey: Yeah, and like you said, we have no shortage of euphemisms for death. As journalists, we are trained to say someone died, right, so that there's no confusion among our listeners. When we would say someone "passed" or is "not with us anymore," you should not hear that in a news report. But like you say, there's a long history of coming up with... yeah. [00:08:03] Adam Alexic: So what works in the journalism context works differently in the TikTok context, in the same way I'm not using my TikTok voice right now because there's a different voice that works for this context. And I'm probably still talking too fast. [00:08:16] Brian Mackey: That's all right. It's gonna keep our listeners awake. Talk a little more, because I was really interested in that — like you mentioned that it's made to evolve or to, I should say, evade algorithms, right? Or the word rises because of that. 
But it really does create openings for mental health discussions among young people, you found. [00:08:37] Adam Alexic: Well, otherwise that discussion wouldn't be able to be had online at all. But yes, offline in some cases, kids are learning this word before the word "suicide" itself. They learn it from friends, they learn it from people online, they don't realize the context, and then they reproduce it. And in fact, it does help them talk about this because if they do know the word "suicide," it could be a very... yeah, a word you don't want to use. It sounds intimidating. [00:09:01] Brian Mackey: All right, maybe we can talk about the origins of some of the other, you know, words that are popular among Generation Alpha, such as skibidi or rizz or Ohio — not the state, I gather. [00:09:14] Adam Alexic: Ohio and skibidi are just nonsense words that they say because it sounds funny. And honestly, these trends are also sort of dying out. There's always new words coming about, and it's more about what the algorithm's doing and how is it bringing these words to us. So Ohio and skibidi, they really don't mean anything. They're just funny things that people say. Rizz is romantic charisma. And these are all quote-unquote "brainrot words." They're classified as part of this meme aesthetic of words that are supposed to rot your brain. And I should say also that neurologically, that's never true. There's never anything worse about one word than another word. But we have this perception, you know, that they belong to this kind of comedic genre of funny-sounding words, and we repeat them. And it's directly because of the algorithm. Once a word like rizz or skibidi starts trending, creators then hop onto that trend for a chance to go viral because algorithms push human trends. We naturally as humans experience fads and memes and trends — this has always been a thing. But algorithms compound that because they try to pay attention to what is trending in human populations. 
They push that, and then creators know that if they hop onto the algorithmic trend, the algorithm will push their videos more. So we replicate these words. I've made videos on the etymology of rizz and skibidi that have gotten millions of views because I knew that they would, because the algorithm pushes these videos. But as I make those videos and as other creators make these videos, we perform those words more into existence. And now they become more of a trend, and then the algorithm pushes them more. And we end up in this positive feedback loop of words hitting the mainstream much, much faster than they otherwise would. [00:10:44] Brian Mackey: Yeah. Well, and this is where you come to — I mean, the entire book is called "Algospeak" because the influence of algorithms is what you identify as this key factor. Maybe before we delve more into that — [delve] there you go, I had ChatGPT write this whole thing. Kidding, kidding. I do not have ChatGPT write my questions. But before you, uh, you know, before we get into that, we can dive into some of the other historic inflection points that you say revolutionize language. What are some of those? [00:11:15] Adam Alexic: Absolutely. Language is a story told through the tools we use to communicate. And this starts out before we have any writing — we had to tell stories still. We relied more on oral tradition. We would have rhyming and meter as the underlying infrastructure of how we remembered our stories. Then we were allowed to write things down in more persistent mediums — stone, tablet, and then we moved to paper. And now, "Oh, it's OK to add spaces between words because we're not as constrained on space. Now it's OK to like make chapters and segment our stories differently." And then we move to the printing press, which, you know, mass-democratizes in one way who can write things. 
There's more written vernacular in other languages than Latin, but at the same time there's more gatekeeping and more institutionalized forces governing language. And the way we tell stories again shifts. Then we move to the internet. The internet allows for the written replication of informal speech. You have text slang, you have people — nobody's regulating as much. And so we have all these new words come because of the internet. And now I think algorithms are another inflection point because they're a new infrastructure for how we're communicating, and that infrastructure is going to subtly shape our communication. [00:12:27] Brian Mackey: And talk a little more about how that works, right? I mean, are these algorithms actually detecting the use of the words? I mean, we're well beyond having to load up hashtags, right? Although people still... [00:12:39] Adam Alexic: Yes, hashtags are — I mean, functionally irrelevant because these algorithms analyze every single word that's spoken and appears on screen when you upload the video. So in the past, hashtags served the function of metadata — information about the content. Now every single word is also information about the content. Every single word you say influences the algorithm. So that means that we will say things because we want the algorithm to recommend our videos. And there's a few things that the algorithm will incentivize, right? It will incentivize using platform-friendly speech like "unalive" instead of "kill." It'll incentivize pushing memes and trends like I talked about with rizz and skibidi. It'll create in-groups and echo chambers — and again, humans naturally sort themselves into groups. This is a thing that we do. But algorithms push us into echo chambers, which has been well talked about. And inside these echo chambers, they're now incubators for new language formation. We now have all these people with shared interests and shared identities with a shared need to invent new slang. So they do. 
But the algorithms open up those echo chambers just enough to allow those words to spread across as broad trends. We have algorithms incentivizing attention-grabbing language because let's not forget that the function of social media, what these platforms are doing, is they are commodifying your attention so that they can sell you more ads and sell your data. That's what they're doing. They bake in those attention incentives into the structure of how videos get recommended. They push metrics like retention, which is how long people watch a video. They push likes and comments and shares. And so now me as an influencer and other creators, we all have to adhere to that. We have to find ways to get attention. So I think language is also evolving around what grabs our attention, which in some cases it's always been true, right? But it's now compounded, and algorithms are pushing these trends faster and emergently creating more language than otherwise would exist. [00:14:29] Brian Mackey: You have to be a consumer to create the content for these algorithms, I take it. [00:14:35] Adam Alexic: Well, they operate under technofeudalist logic where the users are... [00:14:42] Brian Mackey: [Can you] break that down for us? Yeah. [00:14:42] Adam Alexic: Yeah, yeah. Technofeudalism is sort of this idea of how algorithms and platforms structure reality. The users are the product, the users consume the product and the users make the product. So I as a creator, I upload something, then somebody else watches it. And that's their entire model. Like, they just create the infrastructure for that interaction to take place. But in the meantime, they can reap the benefits of our data and our attention. And so basically we are like serfs toiling away at feudal land. That's a very important background to understand if you're trying to not only understand social media, but then understand how we communicate: that it all descends down from what these platforms want us to be talking about.
[00:15:24] Brian Mackey: Is your best understanding that it's primarily about the money, or is, you know... are there other factors? [00:15:33] Adam Alexic: There's different levels at play, right? The platforms care about money. Now humans, we care about communicating and being funny and spreading ideas. And we are in fact incredibly tenacious at doing that. I'm not trying to paint this like the algorithms have the final say in what we do. In fact, the word "unalive" is a testament to how humans always come up with new ways to express themselves. And the word "unalive" is actually now also censored. People have turned to other phrases. You can say you "kermit sewer slide," or you can spell "unalive" with an @ sign or an exclamation point instead of an A or an I. There are always new ways of coming up with methods to express ourselves. And there's always like humans trying to communicate their stories. We always communicate those stories through the media that are available to us. And in this case, that's the algorithm. So it's a story told in multiple parts — what the platforms are doing and what the people are doing. [00:16:24] Brian Mackey: All right, we're gonna continue this conversation after a short break. We're listening back to my talk with Adam Alexic, author of "Algospeak: How Social Media is Transforming the Future of Language." This is the 21st show. Stay with us. [00:16:41] [MUSIC: "Supercalifragilisticexpialidocious"] [00:17:35] Brian Mackey: It's the 21st show. I'm Brian Mackey. Today we're listening back to my conversation on the evolution of language and how the internet, the social internet and vertical video, is changing the way we speak at an ever-increasing pace. This is why you hear young people using words like rizz or skibidi — or at least they did last year when we first broadcast this conversation. It's also why words like "cool" are not seen as slang anymore, at least not by most of us. 
All this and more is covered in the book "Algospeak: How Social Media is Transforming the Future of Language" by our guest Adam Alexic, who goes by @etymologynerd online. Since we're on tape today, no live calls. But we did hear from several of you by text when this first aired in summer 2025. Karen in Urbana said, "'Ain't' is a word that used to have its own associated rhyme: 'Ain't ain't a word, ain't ain't in the dictionary, and I ain't going to use it.' Spoiler," she says, "it's in the dictionary now." Also heard from Betsy in Rockford who said, "I never thought 'awesome' would survive, but here we are. Things are awesome." We also got this voicemail. [00:18:45] Caller: Good morning. This is Tana from Moultrie County. I hadn't thought about language evolving faster because of the internet. I can't intelligently — that is, go back to my WIU Tech, AKA WIU, linguistics course back in the 1960s. But for me, I love genealogy, and genealogy is just the study of grandma's stories of grandpa's stories and words going back a long way. So the study of words we use is just like the study of history, which is just like the study of people's stories from times past, which is just like — don't tell the kids this — but there is one word from my youth. I will be old next year, 80, that goes back at least 100 years to the 1920s and its meaning of good or great. When was the last time you said "cool"? That word is ubiquitous everywhere and apparently here to stay. These examples you gave and many others, I hope not so much. [00:19:43] Brian Mackey: Adam Alexic, talk about words with staying power like "cool" and how, you know, what's happening today may or may not follow that precedent. [00:19:53] Adam Alexic: Yeah, absolutely. And what a lovely analogy to genealogy. I've always felt that etymology is sort of the genealogy of language and history. And all this is very tied in — where ideas come from in the past, how they get remixed and adopted. 
This is sort of what we're talking about when we're also talking about how words stay, how trends and fads stick around. Versus, like, you look at evolution — some families might die out, some family lines might end. In the same way, some words might end. The fad ends. What happened with the word "cool"? I actually opened my sixth chapter with the word "cool" and the story there, because this starts in [the] 1880s in African American English, and it comes under resistance — also associated with cool temperatures. And that's the original idea, that it's a feeling of resistance, of subcultural kind of awareness of oppression. In the 1930s, we see Miles Davis and the general emergence of like cool blues. So we have like "Birth of the Cool," [Charlie Parker's] "Cool Blues." And cool jazz becomes an aesthetic, and it becomes cool to be cool. And the word "cool" literally spreads because it is cool. And you see these subcultural groups like the beatniks and the hipsters start talking about things being cool, and the word continues spreading. And then all of a sudden, "cool" is associated with fashion aesthetics of like wearing cool sunglasses, and the word is taken away from that original meaning of what it is to be cool. But it found a place because it describes literally this subcultural feeling of aura that people like. And the word "cool" spread along that. And you see time and time again that words spread along the conduits of what is seen as funny or what is seen as cool. This is also happening online today. A lot of words are coming again from African American English because it's seen as either cool or funny. A lot of words are coming from the manosphere, which can be both cool and funny. Now, to address like what makes a word stick or what makes a word die out — it's a really complicated answer. There's a lot of stuff going on. One is whether it fits a need in the language. We call that a lexical gap. If there is a... like, we needed a word for this concept. 
So for example, in 2013, the word "selfie" emerged, and that really fit the concept of "we needed something to talk about how we take photos facing ourselves." Around the same time, the word "yeet" emerged in Gen Z slang. And people don't really say "yeet" anymore. If they do, it's ironic. So "yeet" didn't stick around because it didn't have much of a need to stick around. At the same time, it was tied to the lifespan of a meme. When the meme dies out, the word dies out. If your grandmother starts saying "yeet," if your grandmother starts saying "skibidi," you stop saying these words because it's not cool. And it's cool because it's in the in-group of people in the know, right? And that's another thing — how much does a word stick out? If a word sticks out as an obvious example of a meme, it probably will not be around to last because then it dies out with that meme. "Selfie" was never thought of as a meme, although it still has a lifespan like any other word. Maybe hundreds of years from now we'll evolve past the word "selfie." But it stuck around because it wasn't seen as a meme and because it was easily re-adaptable and because it filled a need in our language. So that's sort of a long-winded explanation, but that's how words sort of stick around. [00:23:17] Brian Mackey: No, I like that. I wonder if you can use that sort of algorithmically, to borrow a phrase. This idea that "selfie" was there to meet — it met a need for a word that we didn't really have. Is there a predictive power in that when you're considering, you know, these slang words that are coming up today? How many of them, you know, if you had to roughly put a percentage on it, are truly rising up because there's some unmet need, I should say? [00:23:49] Adam Alexic: It'd be impossible to put a percentage on this 'cause linguists don't even have a definition of what a word is. It sounds funny to say, but we can't actually agree on that. 
We can't agree on what's a slang word, what's a regular word, how long a word is actually staying. It all gets incredibly subjective and hard to quantify here. It definitely is true that algorithms are pushing more memes than before, and we are very aware of the meme words, right? There's a lot of words that are more low-key, such as the adverb "lowkey," which was trending for a while. It really began emerging in the '80s but didn't really get popular until now in Gen Z slang. But [it] originally described this sort of muted aesthetic. And now you can say, "Oh, that's lowkey really cool," and I'm modifying [a verb] when I say that. So it's a new use of the word, but people don't think of that as a slang word. And that's gonna stick around because it kind of subtly wormed its way into our language and filled — not an obvious need, but it nevertheless satisfied like the people who are using this word in a way that they didn't see it sticking out. I think that word is gonna stick. And there's a lot of other small phrases under the radar. "[Darty]," [functions] as a synonym for a frat party. All these things are kind of new emergences, but we don't think of it as quote-unquote brainrot. I think the brainrot words like the rizz and skibidi are not going to stick around because they stick out far too much. We're too aware of them, and they're gonna die out with the word's lifespan. [00:25:19] Brian Mackey: Do you think that — I mean, does this mean that slang has, because of this rapid pace, [is] it less likely to stick around than it might have in the past, where things had to build a little more slowly? [00:25:32] Adam Alexic: It really depends on where we're drawing that boundary of slang. Some of it, absolutely. Like these words come and go so quickly because algorithms are pushing them, and they push the trend faster even once the word is popularized. And now your grandmother's gonna be saying "skibidi" a lot faster than she otherwise would. 
So that means the word "skibidi" is gonna have a two-year lifespan or something. They are pushing the words faster, which could cause them to both emerge and die out faster. However, at the same time, there's also all these under-the-radar words like "lowkey" that it's hard to even quantify how much words are changing. Sometimes it's just a shift in definition, a shift in vibe. All this is still being affected by algorithms. [00:26:14] Brian Mackey: Let's go down to the phones. Craig is calling from Northern Illinois. Craig, thanks for calling in. [00:26:20] Craig: Yeah, thank you for taking my call. I'm curious, you know, I've often recommended the book "Amusing Ourselves to Death: Public Discourse in the Age of [Show Business]," where he refers to the fact that every technology creates its own epistemology. And so this gentleman is working with a technology that creates a kind of worldview that's based on algorithmic computations. But language goes far deeper than just basically waking state or technological concepts. For example, the language of Sanskrit, which is one of the most ancient languages [in] the Indo-European language, it literally is conceived from a field of consciousness transcendent to the waking state of awareness. And with all this technology, what I find is that it loses its depth, it loses its connection to the [interior] because it's based on very finite algorithmic numbers. And since I've been doing lots of transcriptions of my relatives in the 19th century, language has a capacity to of course evolve and change. But with this new social media language, I'm wondering who they're gonna connect with two or three or 400 years from now. Will there be any historical cultural connection to the prior periods, the prior eras of human evolution and development? [00:27:56] Brian Mackey: Craig, thanks so much for the call. Appreciate it. Adam, I'll let you take that on. 
[00:28:01] Adam Alexic: Craig, I love the "Amusing Ourselves to Death" reference, and there's definitely something there that each medium distills an infinite amount of possibilities of what language could be into maybe a finite box. The same is true of other mediums in the past. If you're writing in... I just wrote a book. I had to adhere to formal English, which actually constrained my expression. When I had to talk about the adverb "lowkey" in my book, they made me put a hyphen in it, even though that's not how the word is usually used in slang discourse. So each platform and each medium is uniquely going to constrain how we speak. We're not just speaking on social media, we're also speaking off of social media. And each medium still has an immense power. I think social media right now — algorithms and platforms like TikTok particularly — are where ideas seem to be emanating out of in our culture. And we are getting more of our discourse coming from there because, or at least like, once it's brought up to TikTok, there's an effervescent ability to surface new trends and to push these trends that these platforms are doing. And so these are still the conduits of where our culture is being influenced from. At the same time, it bleeds offline and then it bleeds into other media. And each medium again has this unique method of expression. So it's definitely important to remember that, yeah, language reflexively evolves around the tools that we use to communicate with. But at the end of the day, how much are future generations gonna connect with this? There's this quote by Ralph Waldo Emerson that I always really like, that language is fossil poetry. And what that means is that every single generation contributes a new layer of these fossils of like words that were compelling to them, and then they get stacked on top of each other. I don't think that process is gonna end. Language is the history of us using different media. 
And we started out talking about tablets and we talked about oral tradition and all these different forms of media and the internet and television and radio — these also influenced language. And each of these forms of media does affect the kind of course of where words are coming from and how they're changing. But at the end of the day, we're still just adding new little fossils of poetry, which I think is pretty beautiful. And there will be something in this generation for future generations to look back at and say, "Oh, that etymology, that genealogy traces back to the TikTok era." [00:30:12] Brian Mackey: You know, I've been transcribing some of my own grandfather's World War II letters. Are future grandchildren of today's high school students gonna be going back and, you know, doing mashups of their grandparents' TikToks? Or I mean, does the, you know, how does the ephemerality... yeah, yeah. [00:30:33] Adam Alexic: There's more persistent mediums. And I think it's absolutely true that these platforms are not going to last forever. For example, we saw Vine, the video app platform, die out. A lot of Vines were lost. And that's something about the internet — that it does feel less permanent in the real world. You know, I always try to back up my stuff on hard drives because you never know. You never know when things are gonna be deleted, when you're just gonna lose access. And constantly information is being lost online. So this is definitely something to think about. Maybe we'll be able to play TikTok montages at funerals, but I somehow don't think so. I think it'll still be like the photos and the videos we can pull together. And some of that might be TikTok, but it's what is persistent, the mediums that continue carrying ideas. Nevertheless, the words that we're using [are] now crystallized into language itself. So that's not stuck on the TikTok medium. 
The fact that these middle schoolers are saying "unalive" and "rizz" — that's actually in our offline, infinite, expansive language. [00:31:36] Brian Mackey: So I guess maybe the object lesson here or the practical news you can use is like, set that inheritor for your iCloud account, right? There's — Apple has that setting where you can go in and say, you know, in case I die, in case I'm unalived, someone can access my account. A couple of minutes until we need to take a break. I wanna come back to this idea of censorship you talked about, and specifically, you know, some of these words that... OK, one is "retarded," right? This is once a mainstream term to describe people with developmental disabilities. And, you know, it was once an improvement on "moron" and "imbecile," which are earlier words that we used for people with those disabilities. But now it's so derogatory [that] some people only refer to it as the R-word. Yet here's Elon Musk trying to pull it back into common usage. Talk about what's going on there. [00:32:29] Adam Alexic: Steven Pinker terms this the euphemism treadmill — the idea that words become pejorated or take on a negative meaning, and then we need to find a new word to use [in] the same function. So the fact that imbecile and moron were genuine scientific classifications of intelligence in the early 1900s but then got turned into insults meant that we had to come up with the word "retarded." Then that became an insult. And then we turned to other phrases like "mentally challenged" or something like that. And these also took on their own negative coloration. That's always been a process, and again, this is something from the early 1900s. And there's always people in varying stages of reacting to that, thinking, "Oh, we should be using this word, we shouldn't be using this word." The fact of the matter is that words change vibes over time. 
What we do get is — I think algorithms amplify this sort of euphemism treadmill through literally what we can see with "unalive" and other examples. I really like the example of China. So in China, the word for censorship is censored. And people are very aware of this. They instead turned to use the word "harmony," which sounds something like [hexie]. And that started being censored. "Harmony" was an allusion to the Chinese government's goal of creating a harmonious society, so it was sort of a play there. But once the word for "harmony" also started being censored, because the word for "censor" was first censored, users then moved to the word for "river crab," which sounds similar to the word for harmony, [hexie]. And that kind of also started being censored, so people turned to the phrase "aquatic product." And what you see is it's kind of a game of whack-a-mole, right? The mallet comes down, this new word starts being censored, and a new mole pops up — [a] new form of human expression. And if anything, humans are constantly one step ahead of the algorithm. We're aware of what it's doing. And the word itself is a metalinguistic recognized symbol that we know this is happening. But at the same time, we find ways to express ourselves because that is a tenacious creative human impulse — that we find ways to talk about what we want to talk about. [00:34:31] Brian Mackey: All right, we need to take another break. We'll have more from Adam Alexic. His book is "Algospeak: How Social Media is Transforming the Future of Language." This is the 21st show. [MUSIC] It's the 21st show. I'm Brian Mackey. Let's get back to my conversation with Adam Alexic. His book is "Algospeak: How Social Media is Transforming the Future of Language." You can find him across social media. He's @etymologynerd. We first broadcast this in summer 2025. Since our program's on tape, we're not taking any calls. But you can let us know what you thought. Our email address is talk@21stshow.org. 
All right, so as a content creator, right, what do you think of algorithms? How have they changed your approach? [00:35:43] Adam Alexic: Well, exactly. As a linguist and as a content creator, I can't not think about this. I can't — I'm always noticing my language and how I'm rerouting my speech around these algorithms. And it started — my research started with words like "unalive," but I soon began to notice a lot of other things. Inflection patterns like the influencer accent. At the start of this segment, you played a clip of how I talk online, which hopefully sounds different than how I talk in person. I'll talk more quickly, I'll stress more words to keep you watching my video. And all of that is because I'm trying to get people's attention because I want the algorithm to push my content. And you'll see different forms of influencer accents emerge. There's a lifestyle influencer accent like, "Hey guys, welcome to the 21st show!" Sort of that [uptalk] characteristic of like Valley Girl English, but it's actually very good for retention because it makes it sound like something's always coming next and it fills dead air, which is really bad for algorithms. So these accents emerge because they are good at grabbing attention, and then they replicate online. And people start subconsciously speaking in that simply because that's the accepted way to speak online now. And so that's just one example, right? You see grammatical changes, dialectal changes, all these memes and trends and in-group language also [emerge] because of algorithms. [00:36:54] Brian Mackey: And we're even seeing this loss of accents and the emergence of online-specific accents that you just alluded to. Say more about that. [00:37:03] Adam Alexic: So, I mean, we've had regional accents dying out before the internet. So it's always a product of globalization. The Texan accent, for example — there's a study that came out in the last 20 years [showing it] declined by 60%. 
We have all these regional accents in England dying out. The southern accent's kind of on its way out, you know. There's a few strongholds, but it's been a thing that we're homogenizing the American accent as we globalize, and we're sort of trending towards similar speech. At the same time, I feel like some of that speech is being replaced with online speech because this is also now a place in which we communicate with one another. And there's all these in-group dialects that develop around different fandoms. Like, Taylor Swift fans will speak completely differently online in the Swiftie community, and K-pop fans will talk differently, and the manosphere will talk differently. And then there's also these like verbal accents that are different between influencers. So you see almost the replacement of offline variation in linguistics with online variation. [00:38:04] Brian Mackey: What insights would you have for like news media, right? Well, maybe we can turn this into a bit of a struggle session for us here. You know, exaggerated titles, fast-paced videos: this is what drives attention in modern social media. Like, talk about the disconnect between that and, you know, what I do, what I was trained to do when I was cutting my teeth as a... [00:38:28] Adam Alexic: What you're doing right now is you have a radio accent, right? This is like, in NPR they make fun of like the NPR accent. On broadcast TV, "This just in!" People talk differently on air than they do with their families because there's a certain expected way to present through this medium. Influencers are just doing the same thing in a new medium. However, there are differences, of course. The sensationalism of the internet is absolutely something that maybe is amplified online. Unfortunately, I do think I see this bleeding into our real kind of media ("real" is, you know, also fake), but media in other contexts. 
So the New York Times, for example, A/B tests all their headlines and they just go with the one that gets more clicks. That doesn't feel like neutral objective reporting to me. That feels like yellow journalism and sensationalism. But that's something that they have to do to compete with the social media landscape, where things are battling for your attention online. So unfortunately, we have more of a trend towards things that are really like in-your-face, meant to grab your attention. [00:39:30] Brian Mackey: Couple of other points from your book I wanna be sure we get to. One of those is that, you know, we talked a little about "cool" and the history of that, but a lot of new words come from African American English. Talk about that lineage. [00:39:43] Adam Alexic: Right. So we talked about how words follow the conduits of what is seen as cool or funny. And yeah, unfortunately, African American English was created as a way for Black Americans to differentiate their speech from the white norms of the English language, because people do point to these like Oxford Dictionaries as the basis for what the English language is. But that doesn't really represent a lot of people. So they come up with language, especially in like, let's say, the ballroom scene in the 1980s. You'll have modern-day slang words like "slay," "serve," "ate," "bet," "yas." All these are modern slang words, and yet they all come from that queer Black Latino space in New York City in the 1980s. And that happened because the space really had a kind of a shared effervescent cultural moment where they were coming up with new ways to build their identity and share that with each other. And then it was also seen as cool. It was also just this socially desirable thing among the liberal counterculture to start speaking more like ballroom slang. And it starts with, you know, Black and Latino gay people, but then it moves to white gay people. 
And then it moves to the straight white friends who are girls of gay men. So that's how these like words move across filter bubbles. That's a natural thing that eventually, just like the word "cool," words change context. But the word "cool" took maybe 50 years to even start hitting the mainstream. Ballroom slang got there a lot faster. [00:41:02] Brian Mackey: Yeah, yeah. So like, you know, at some point people are a fan of these cultures and they like the word, so they're doing that. And then somebody pulls out like, "Well, you're appropriating this culture." How do you think about that line? And I mean, is that a new trend as well? [00:41:19] Adam Alexic: No, no. I mean, the word "cool" was appropriated. And in linguistics, I wanna say the word "appropriation" just means taking from one context and using [it] in another. That's all it is. It sort of does have all these social implications outside of linguistics because of kind of the... yeah, the social implications that follow there. Like, what does it mean that you're using it in a different context? Like, are you removing power from that original community in which it was used? I mean, probably. Again, I'm not here to tell you what to say. I'm here to show what's happening, and you can make your own calls whether you think this is a good thing or a bad thing to incorporate into your vocabulary. At least once we are more aware of where words are coming from, we can be more conscientious in our language use. But yeah, appropriation is this literal linguistic process of words changing between social groups that's always been happening. [00:42:05] Brian Mackey: And I guess when I said "is it new," I guess I meant the idea of like pointing fingers and saying, you know, like I hung out with a bunch of musicians when I was in college in the '90s. 
Some of them were in the jazz studies program, and they would start using words like "cat" and "copacetic" as though they were, you know, in the '50s Greenwich [Village] scene or whatever. And, you know, I think nowadays people might say, "Ooh, that's cringe to do that," or worse, right? So yeah, I guess that's what I was asking. [00:42:34] Adam Alexic: There is more of that woke attitude, I guess, that doesn't like language moving across these bubbles and maybe more of a word policing than there used to be. But at the same time, there is still like regulatory functions in in-group language. Like if you tried to say the word "slay" to a ballroom queen in New York City in the 1980s, they would probably look at you funny. [00:42:57] Brian Mackey: Yeah. [00:42:57] Adam Alexic: It's just that there's no regulatory mechanism online. [00:43:01] Brian Mackey: Talk about the emergence of "core" words, right? What is "core"? [00:43:05] Adam Alexic: Yeah. Well, "-core" is a suffix that really emerged in 2021, 2022. It was circulating on Tumblr before, but as we see, algorithms popularize trends from previous platforms and previous memes in new ways. And so "-core" describes a lifestyle. You could append it to anything — cottagecore, goblincore, angelcore. These are not only like fashion aesthetics, but they're marketed as entire lifestyles. And there's cottagecore clothing and cottagecore music and cottagecore decorations for your home. And what these are is they're more than, you know, simple labels. Once a label's out there, you either identify with it or against it. And the algorithms like attaching labels because now they have more ways to categorize you. They have more ways to recommend you more content, more ways to target you as a consumer. And I examine in the book, "Algospeak," how they push these cores because they are good for that purpose. Like they're not even trying to hide it. 
The TikTok business page literally claims that subcultures are the new demographics and gives businesses ideas for how to profit off of cottagecore. And that word "demographic" is interesting because the demographic in the past is like race, age, gender. Now it's also whether you're cottagecore or not. So "-core" to me is a signal of the increasing kind of commercialization of our labels and how algorithms are pushing identities — are manufacturing them really — because you get a cottagecore video, you identify with it, it feels good to get something that you think belongs to you. You're like, "Wow, the algorithm really knows me." But the algorithm kind of manufactured this in the first place, or circularly did with actual people emergently creating a new identity that's then easy for them to make money off of. And isn't it convenient that cottagecore clothing is one click away on the TikTok Shop? [00:44:46] Brian Mackey: Isn't it convenient indeed? Well, there's a lot of people who talk about regulating algorithms or regulating these social media companies. Do you see any appetite, any danger? How do you think about those possibilities? I mean, TikTok is, I think, if I understand it correctly, it should be banned in the United States right now by law, or at least downloading of the app, even though the administration is kind of ignoring that law. How are you thinking about that? [00:45:15] Adam Alexic: Yeah, whatever is happening with TikTok, it clearly still is around. It's true that platforms are just allowed to do whatever they want. They have carte blanche. There's Section 230 of the 1996 Telecommunications Act which says that basically platforms are not liable for their users' speech, and also platforms can impose whatever regulations they want. So what that means is that they can just decide what speech they want on the platforms, not subject to government regulation. This doesn't count as free speech or not if they decide to censor something. 
So they often censor for commercial priorities. YouTube [censors] to have language that can be monetized, for example. And they'll demonetize creators. And as a result, like creators tend to use more corporate-friendly, sanitized language. TikTok will censor language that it doesn't like as well. So this is sort of a trend we see that the platforms have control over the general speech, although I will say that people are very inventive with finding ways to express concepts. So it's again — this is not some Orwellian scenario. But the platforms do have an inordinate amount of power, and small platform tweaks can really change what content we're seeing and what messaging we're getting. In January of 2025, for example, Meta loosened a lot of content guidelines on Instagram and Facebook. They allowed for more AI content. And what that means is that since January, there's been way more racist AI slop on Instagram reels than I had ever seen before in my entire time on social media. And it's because they simply tweaked some parameters because Donald Trump got elected and they had this [MAGA] rebrand. And that's now changing the actual content and messaging we're getting. [00:46:54] Brian Mackey: You say it's not Orwellian, but it sounds at least dystopian, right? I mean, Orwell had some specific ideas about censorship and thought control, but it doesn't sound great. [00:47:05] Adam Alexic: I don't think they're doing thought control. Like, they're clearly representing a different version of reality which may influence kind of our headspace and our thoughts and our consensus. But we are still able to think things, and we are still able to express things, which the idea in "1984" was that you can't even think and express these things. [00:47:22] Brian Mackey: Yeah. Well, we have always been at war with [Eastasia]. Adam Alexic is a linguist. You can find him across social media at @etymologynerd. 
He's also author of "Algospeak: How Social Media is Transforming the Future of Language." That's all the time we have in our program today. Coming up on Monday, this past January marked five years since a mob attacked the U.S. Capitol building. Scholar Laura K. Field has spent years researching what led up to January 6th and the intellectuals who have aligned themselves with President Trump. Her book is "Furious Minds: The Making of the MAGA New Right." The 21st show is produced by Christine Hatfield and Jose Zepeda. Our digital producer is Colson Kahn. Technical direction and engineering from Jason Croft and Steve Mork. Reginald Hardwick is our news director. The 21st show is a production of Illinois Public Media. I'm Brian Mackey. Thanks for listening.

Today's show included a rebroadcast of the following "best of" segment first aired July 23, 2025: From 'cool' to 'selfie' to 'slay', linguist's book takes a look at the evolution of language through lens of social media.